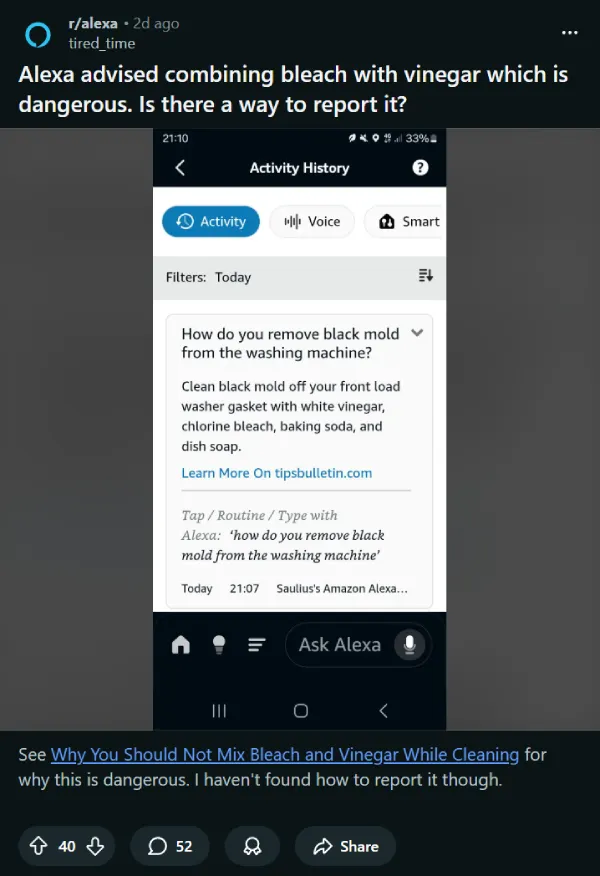

A Reddit user on r/alexa got more than they bargained for after asking Amazon’s Alexa how to remove black mold from a washing machine. The AI came back with this: “Clean black mold off your front load washer gasket with white vinegar, chlorine bleach, baking soda, and dish soap.” Alexa also pointed to tipsbulletin.com as its source.

The problem comes down to one word: “and.” Mixing bleach and vinegar can release chlorine gas, which irritates the eyes, nose, throat, and lungs, and at higher concentrations can be fatal. It’s not the kind of mistake you want to make in a closed laundry room.

The user, u/tired_time, posted about it on the r/alexa subreddit, flagging the response as dangerous and asking how to report it to Amazon. The post picked up steam pretty quickly, drawing a range of reactions from other Alexa users.

Opinion in the thread was split. Some argued Alexa was just listing cleaning options, not telling anyone to combine them. Others pushed back hard, saying the phrasing was too easy to misread. One commenter put it simply: “and” means combine, “or” means choose. That’s not a minor distinction when one option involves chlorine gas.

The TipsBulletin article Alexa cited actually lists bleach and vinegar as separate methods, each with its own set of steps and precautions. The source isn't wrong. Alexa flattened several individual methods into one sentence, and that's where things went sideways.

TipsBulletin also lists baking soda and vinegar together as a safe combination for tackling stubborn mold on the washer gasket, so the "and" confusion is understandable given how the article is structured. But bleach and vinegar in the same breath? That's a different story.

As for reporting the issue, several users in the thread pointed out that you can flag it through the Alexa app. Go to Settings, open Activity, head to Voice History, find the interaction, and tap the thumbs-down icon. You can also get to it via Help and Feedback in the app and submit a note directly to Amazon.

This is not the first time Alexa has drawn criticism for answers that miss context or surface the wrong part of a source. Back in 2021, Amazon updated Alexa after reports that it suggested a dangerous "challenge" to a child, pulling wording from the web without the surrounding warning context. More recently, UK fact-checking group Full Fact documented cases where Alexa served incorrect answers while attributing them to Full Fact's own fact checks.

This is a good example of how AI assistants can strip context when condensing sourced information. Most of the time that just means a vague or slightly off answer. Last year, I covered some big bloopers by Google's AI Overviews, ranging from telling someone it's fine to leave a dog in a hot car to claiming a World War II veteran died during a war that ended 45 years before his actual death. When household chemicals are involved, though, the consequences can be a lot more serious.

Featured image generated with AI

TechIssuesToday primarily focuses on publishing 'breaking' or 'exclusive' tech news. This means we are usually the first news website on the whole Internet to highlight the topics we cover daily. So far, our stories have been picked up by many mainstream technology publications like The Verge, MacRumors, Forbes, etc.